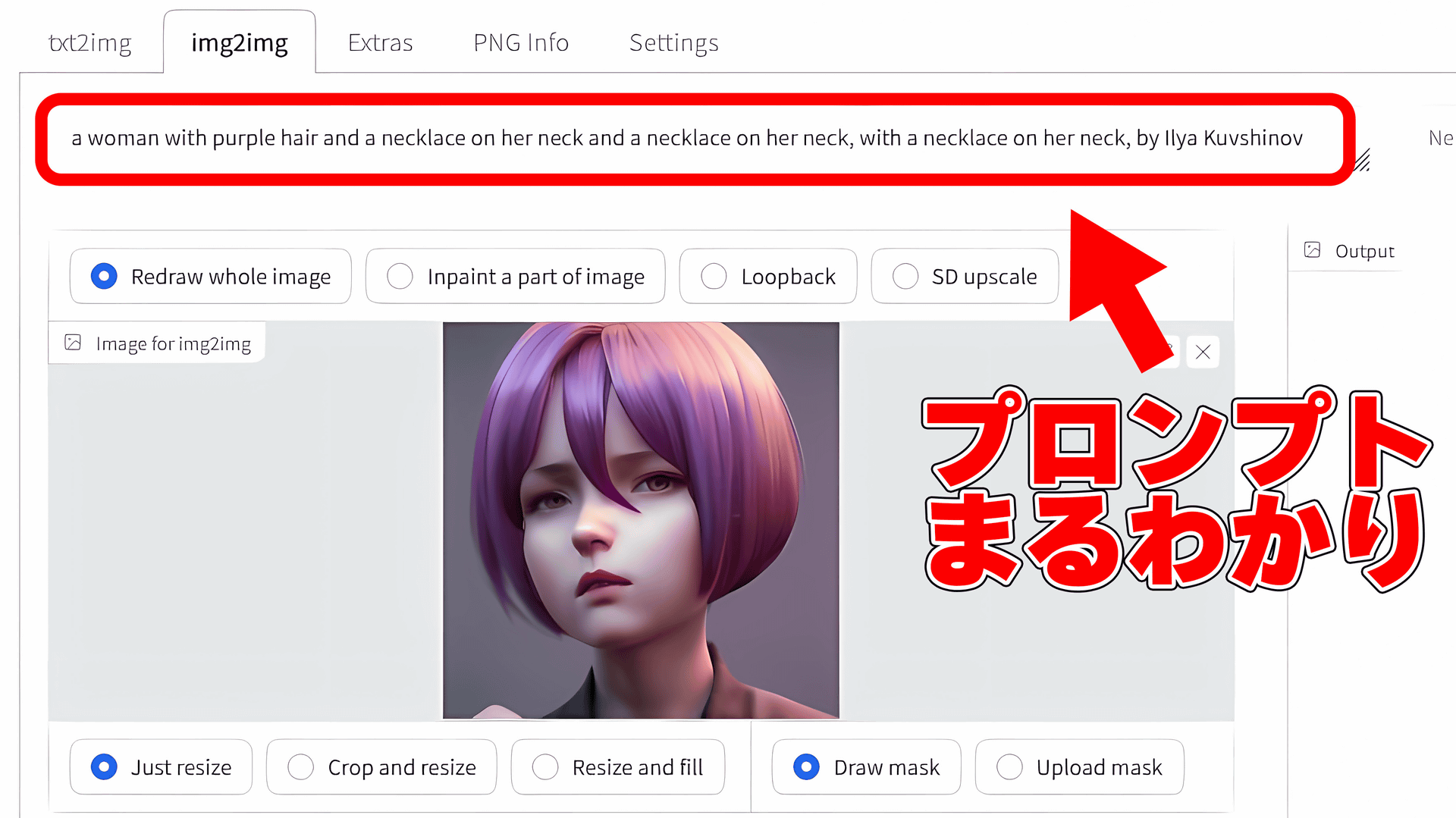

Nice looking hand I found. Learn to use CLIP retrieval, BLIP, etc. and understand how the model maps language to pixels. You will get better results. : r/StableDiffusion

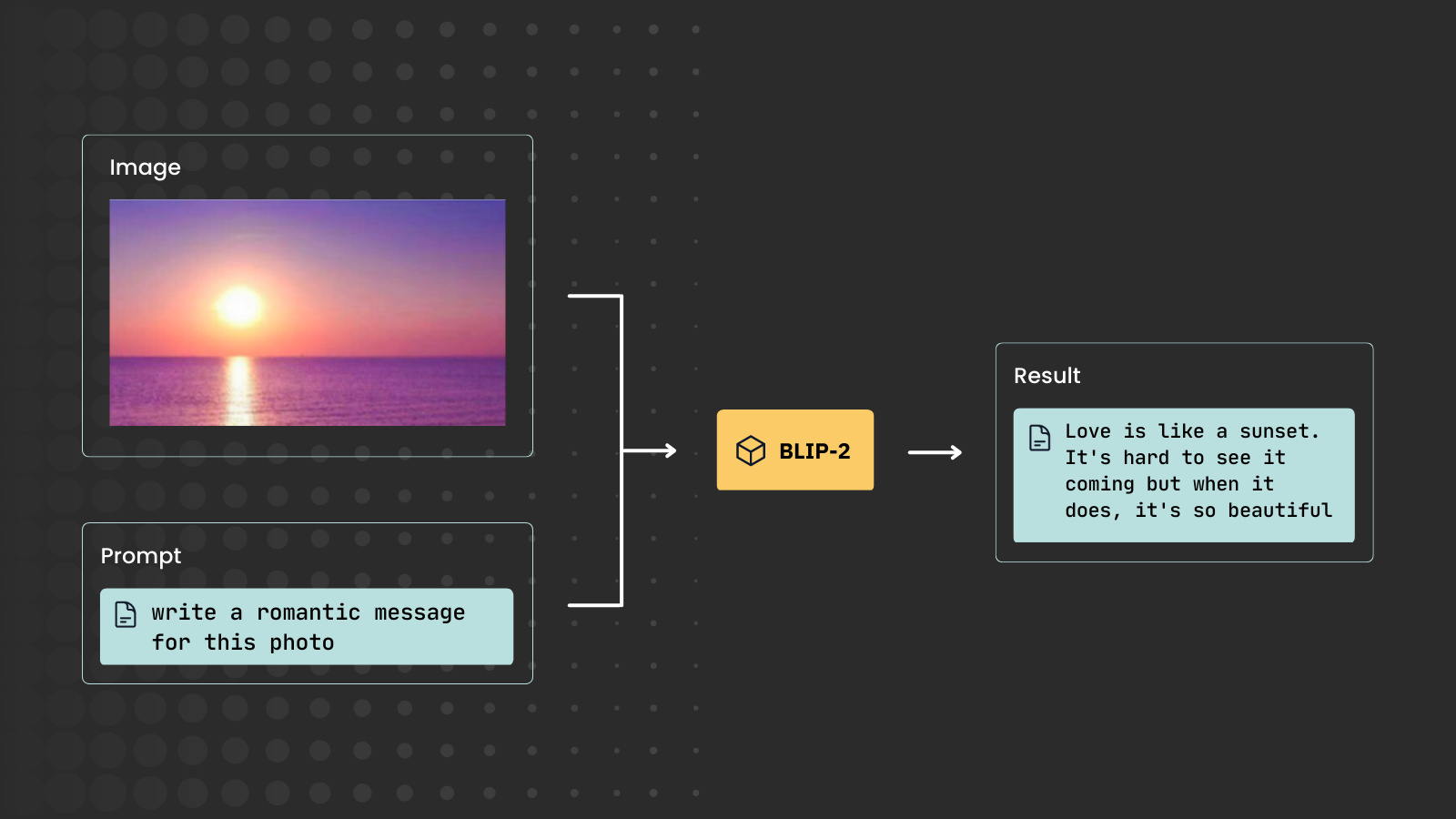

Image and text features extraction with BLIP and BLIP-2: how to build a multimodal search engine | by Enrico Randellini | Sep, 2023 | Medium

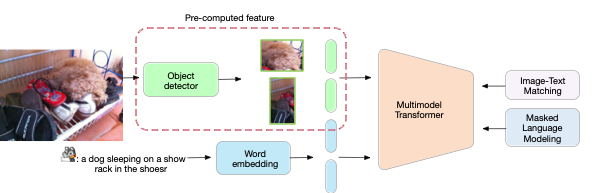

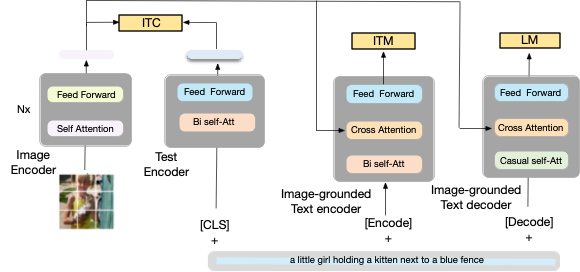

Paper Summary: BLIP: Bootstrapping Language-Image Pre-training for Unified Vision-Language Understanding and Generation | by Ahmed Sabir | Medium

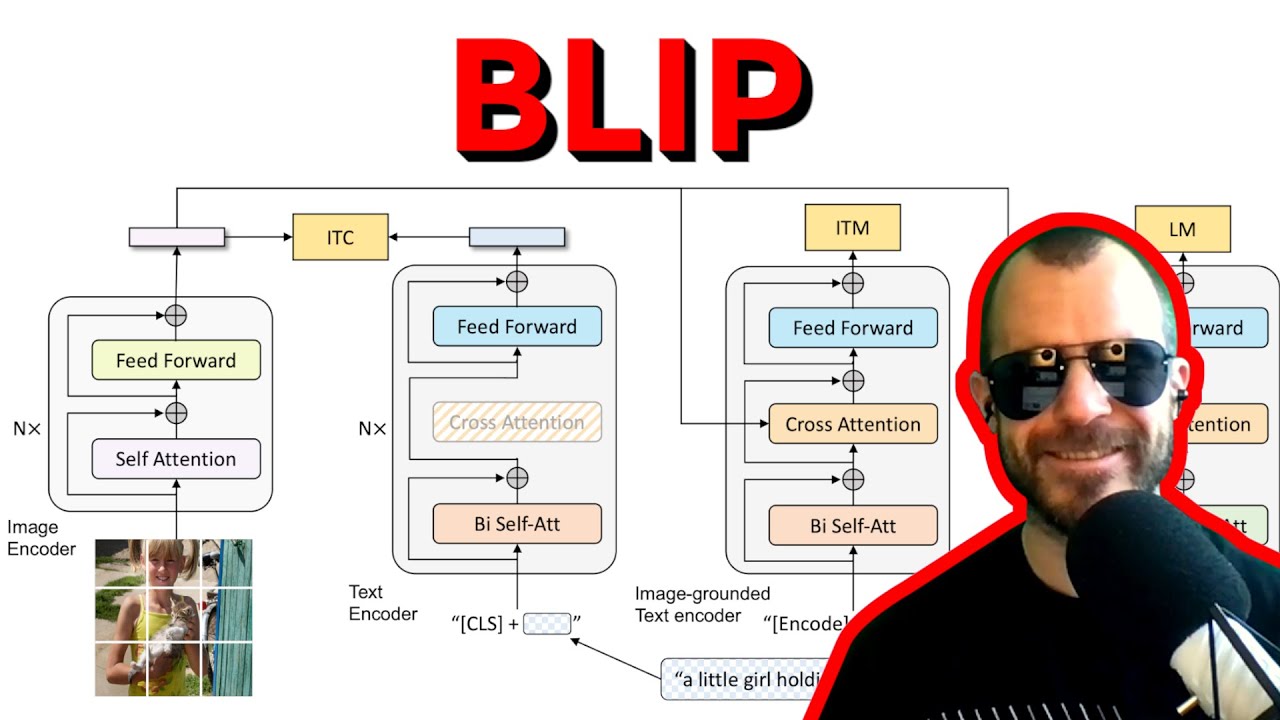

BLIP: Bootstrapping Language-Image Pre-training for Unified Vision-Language Understanding&Generation - YouTube

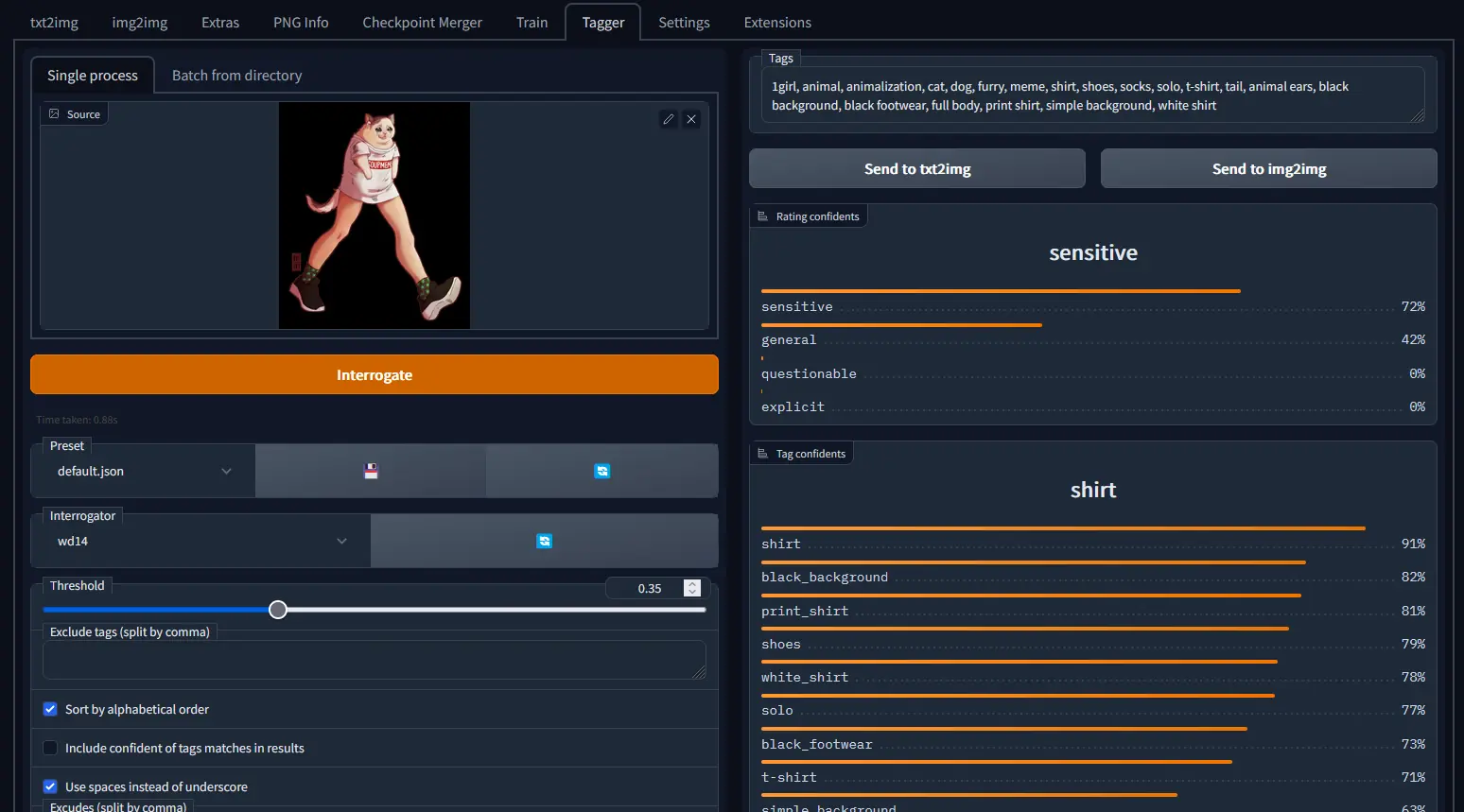

How to use "CLIP interrogator" that can decompose and display what kind of prompt / spell was from the image automatically generated by the image generation AI "Stable Diffusion" - GIGAZINE

![[2301.12597] BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models](https://ar5iv.labs.arxiv.org/html/2301.12597/assets/x1.png)

[2301.12597] BLIP-2: Bootstrapping Language-Image Pre-training with Frozen Image Encoders and Large Language Models

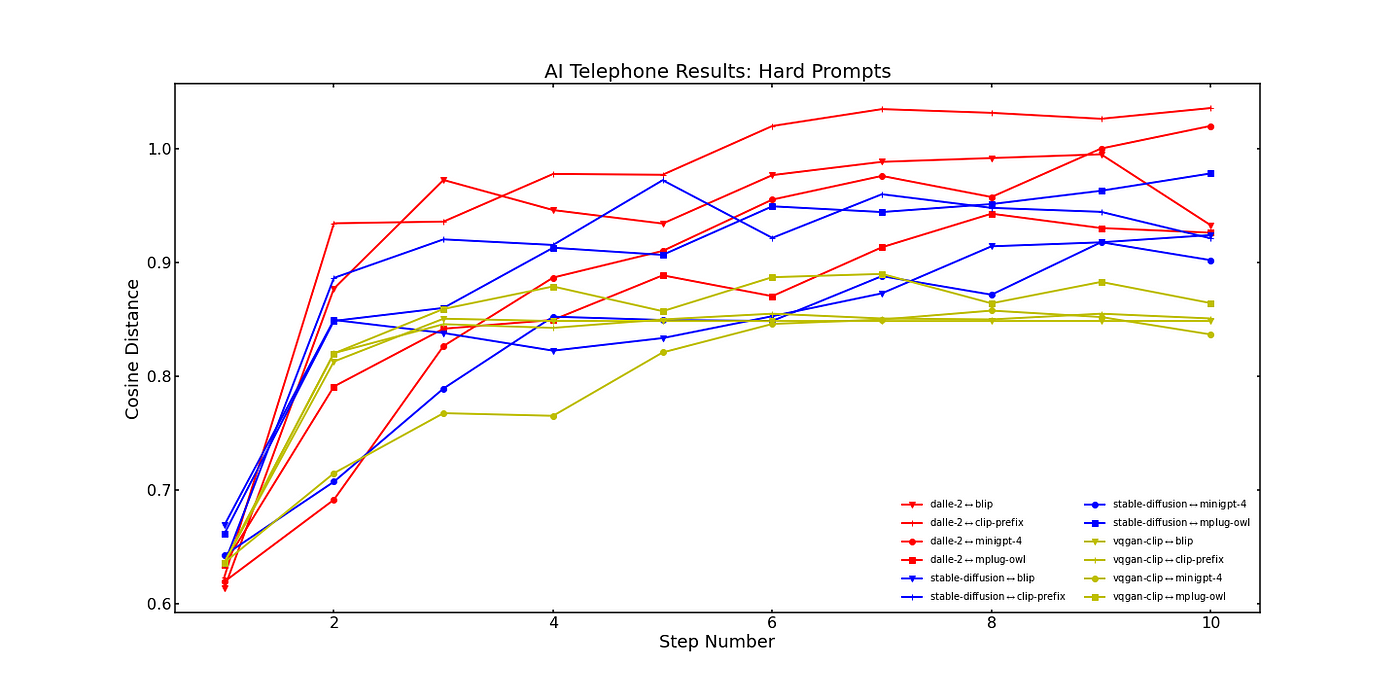

![🎯 [LB: 0.45836] ~ BLIP+CLIP | CLIP Interrogator | Kaggle](https://user-images.githubusercontent.com/45982614/220214422-19529ba3-9c13-40cd-a3a6-434785002974.png)

![[2209.09019] LAVIS: A Library for Language-Vision Intelligence](https://ar5iv.labs.arxiv.org/html/2209.09019/assets/x2.png)