OpenAI's unCLIP Text-to-Image System Leverages Contrastive and Diffusion Models to Achieve SOTA Performance | Synced

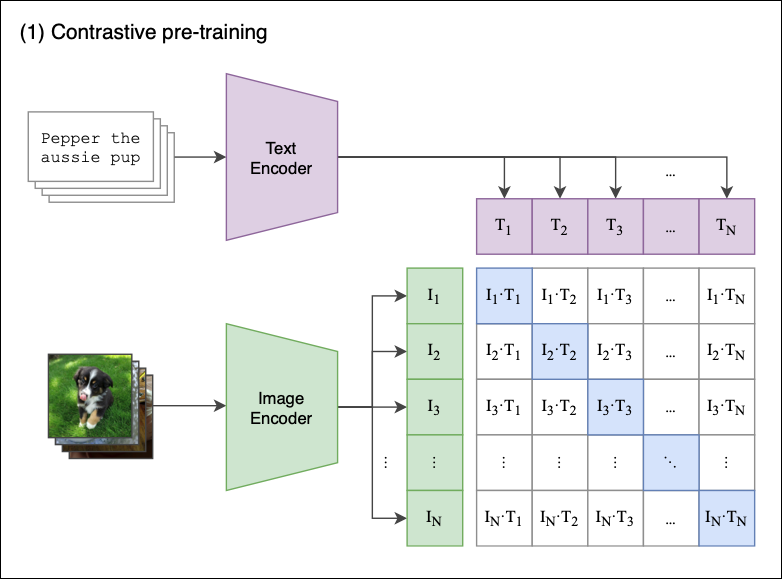

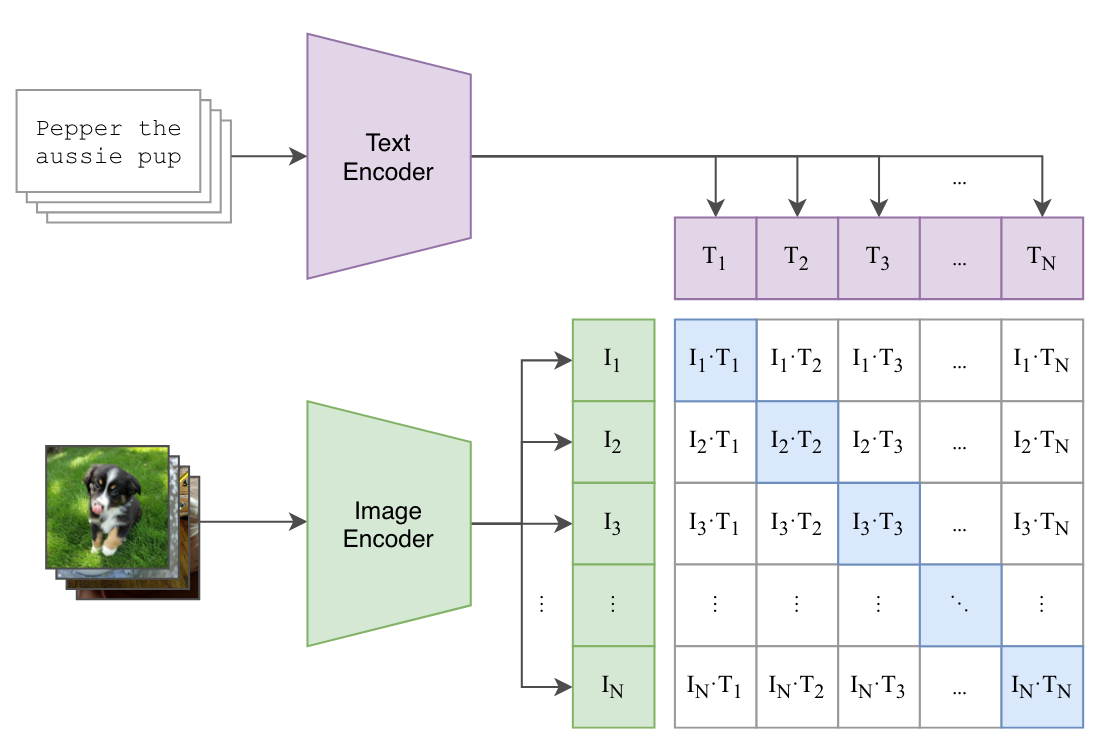

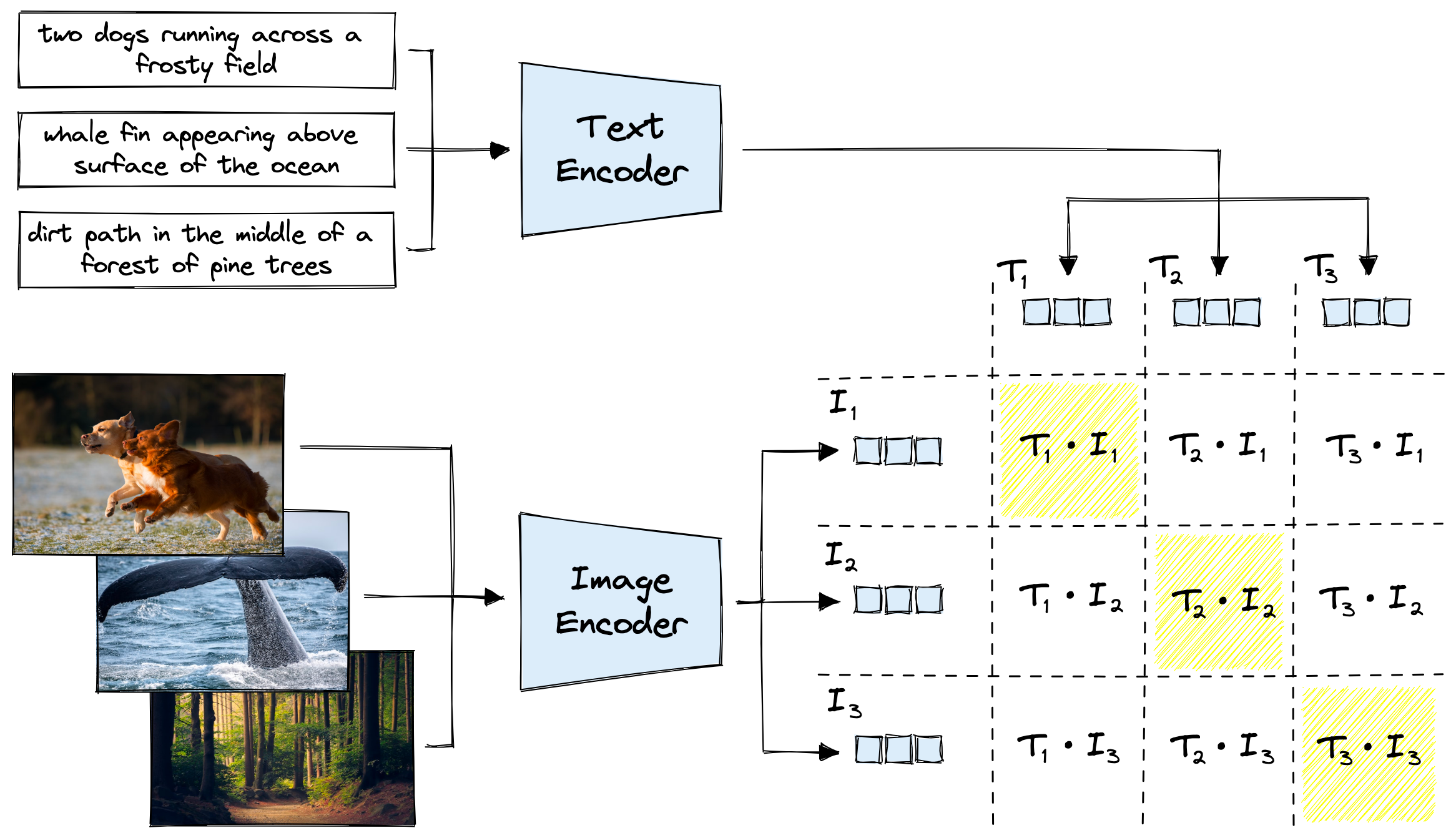

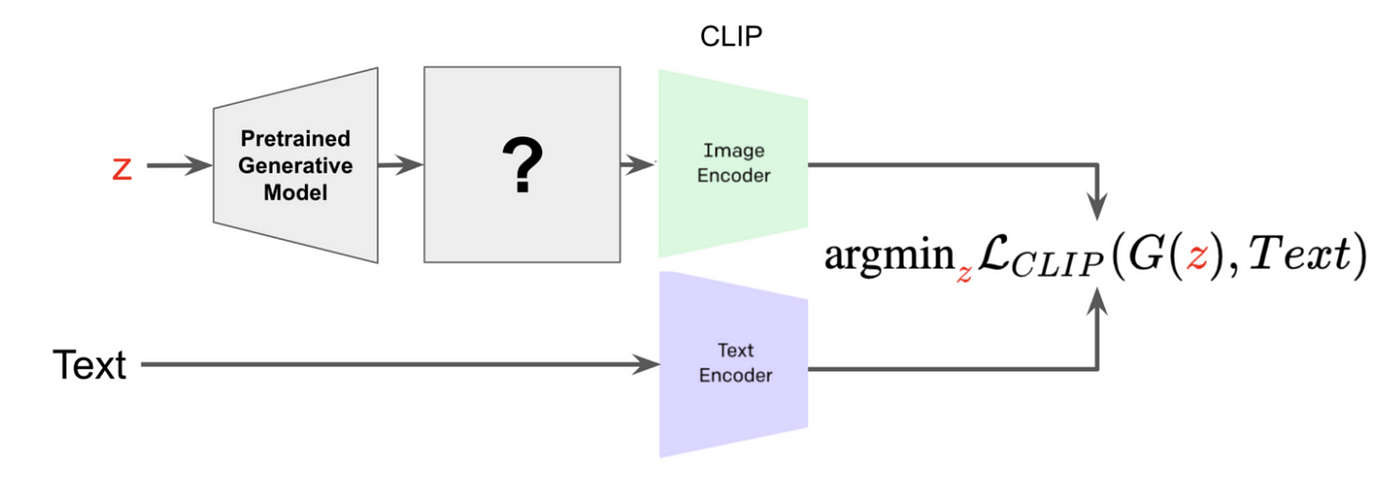

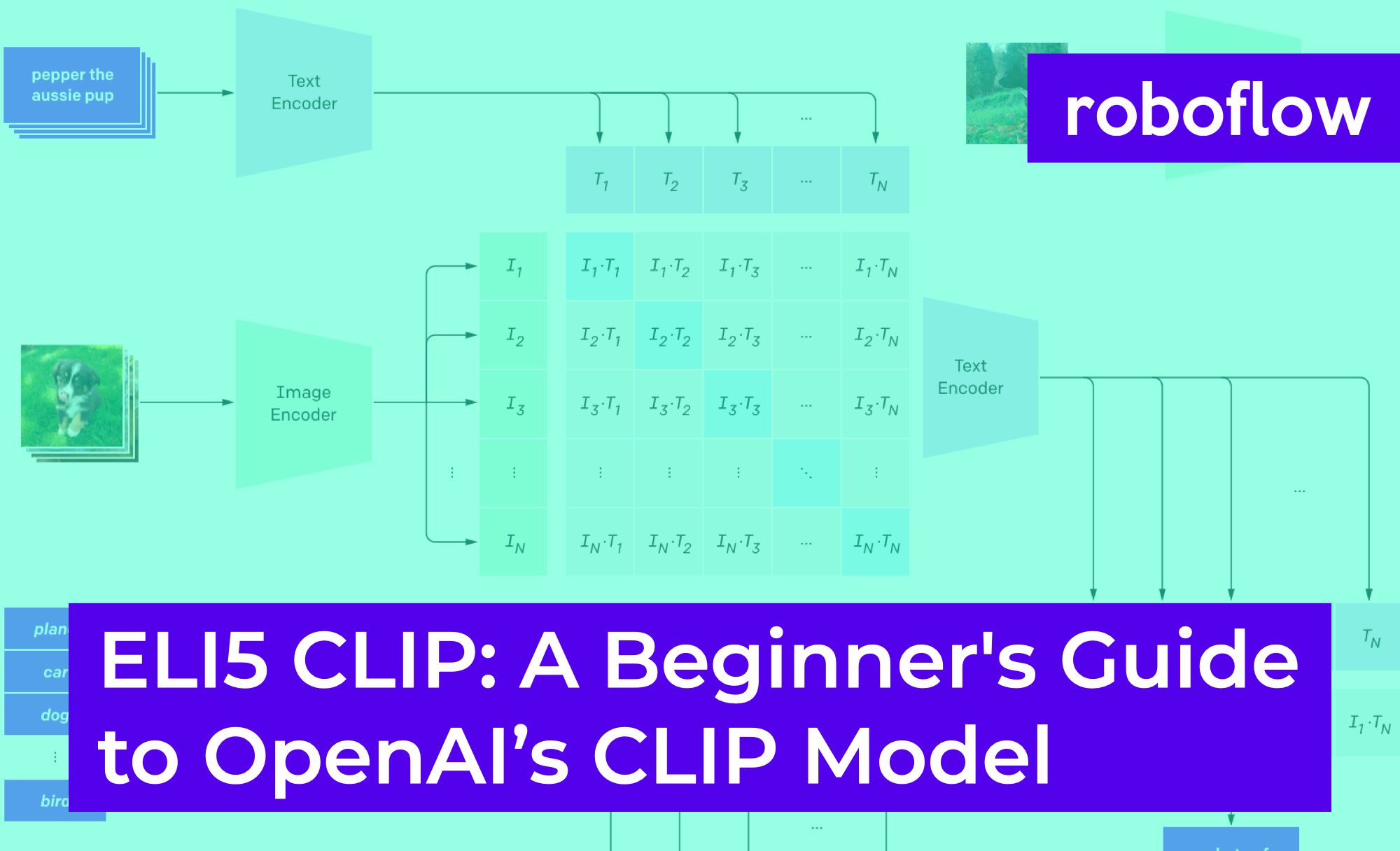

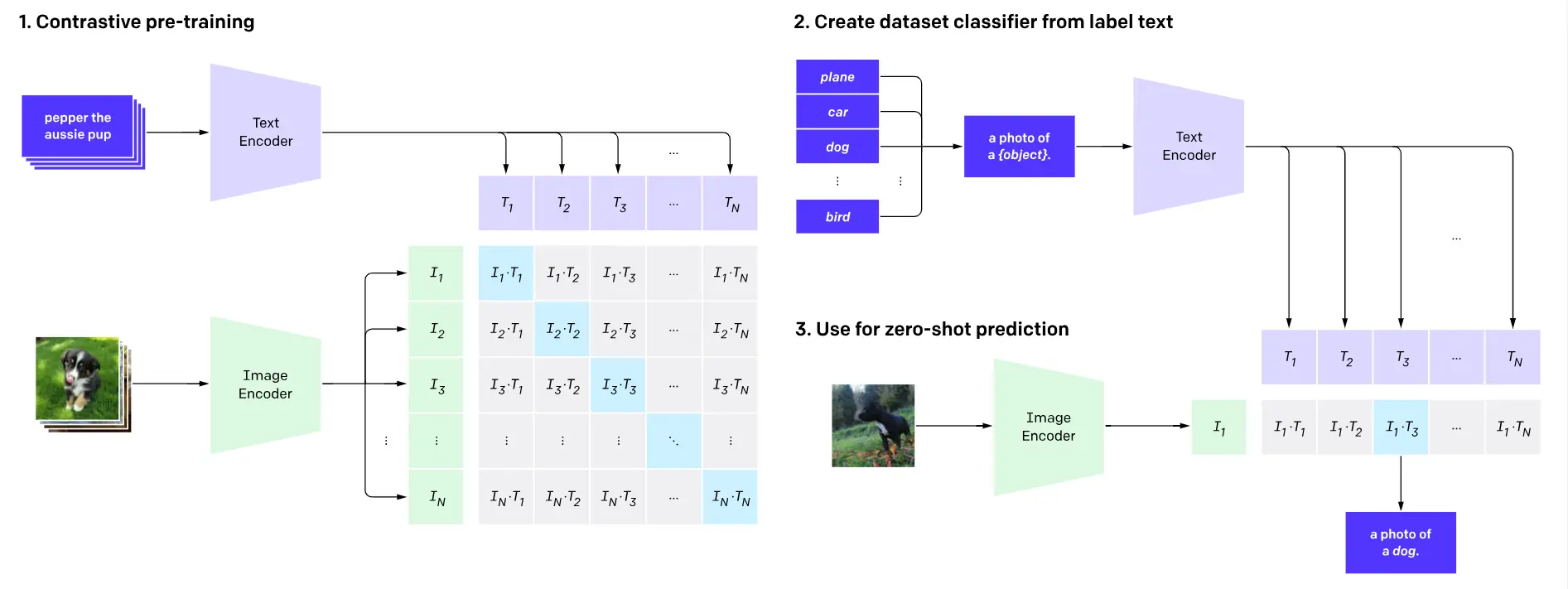

Example showing how the CLIP text encoder and image encoders are used...

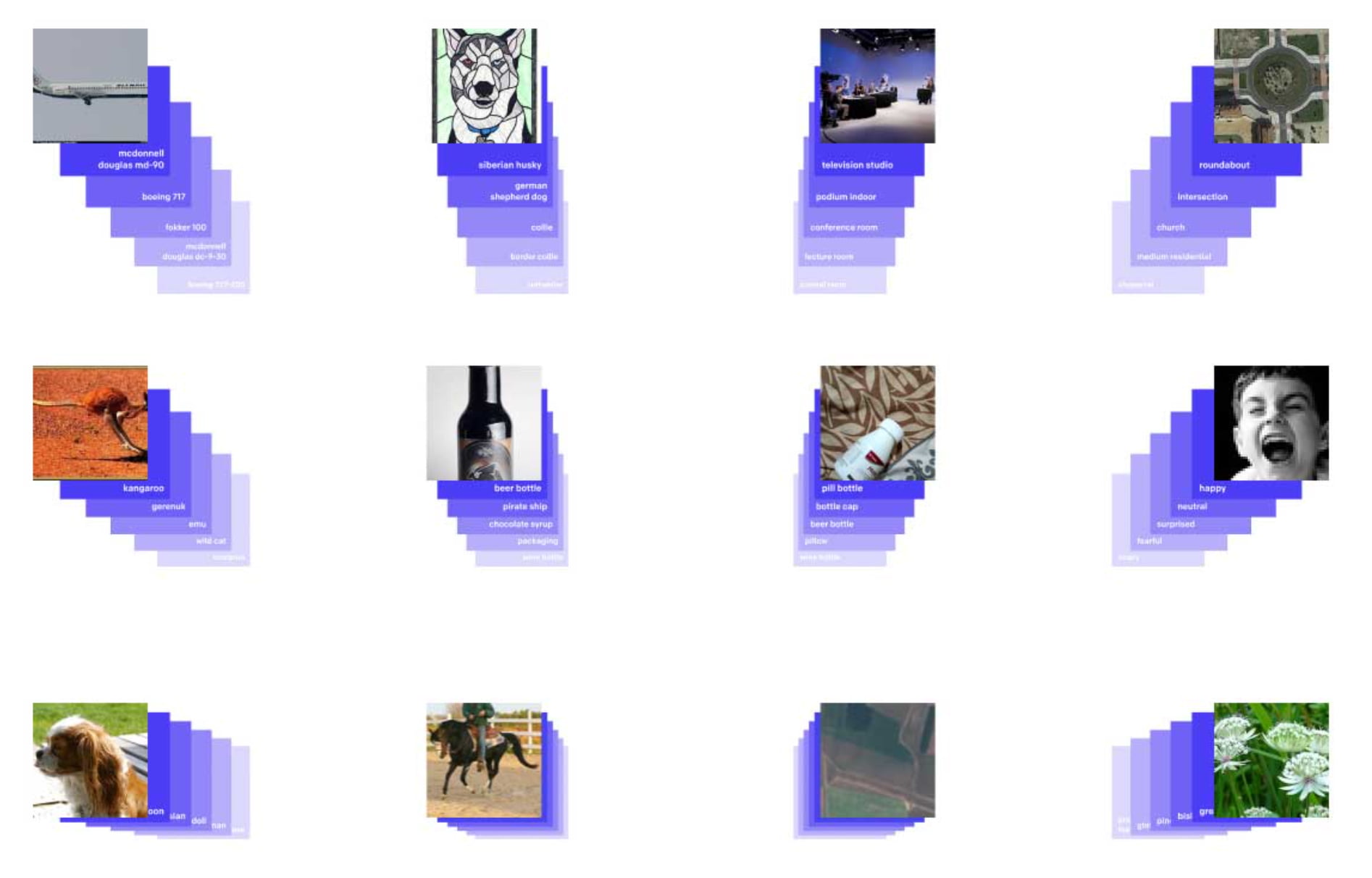

OpenAI's CLIP Explained and Implementation | Contrastive Learning | Self-Supervised Learning - YouTube

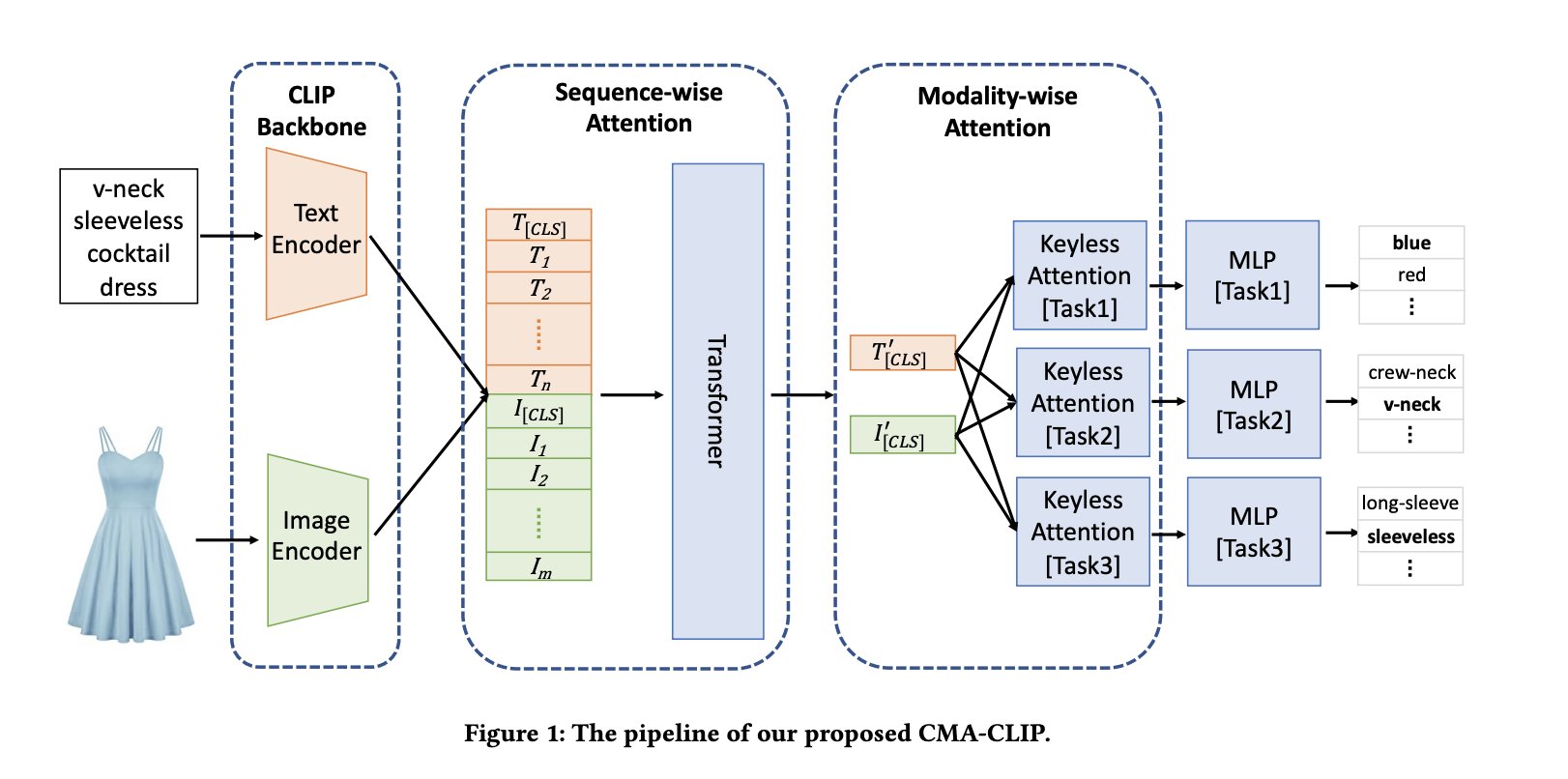

AK on X: "CMA-CLIP: Cross-Modality Attention CLIP for Image-Text Classification abs: https://t.co/YL9gQy0ZtR CMA-CLIP outperforms the pre-trained and fine-tuned CLIP by an average of 11.9% in recall at the same level of precision

Meet 'Chinese CLIP,' An Implementation of CLIP Pretrained on Large-Scale Chinese Datasets with Contrastive Learning - MarkTechPost

Romain Beaumont on X: "@AccountForAI and I trained a better multilingual encoder aligned with openai clip vit-l/14 image encoder. https://t.co/xTgpUUWG9Z 1/6 https://t.co/ag1SfCeJJj"