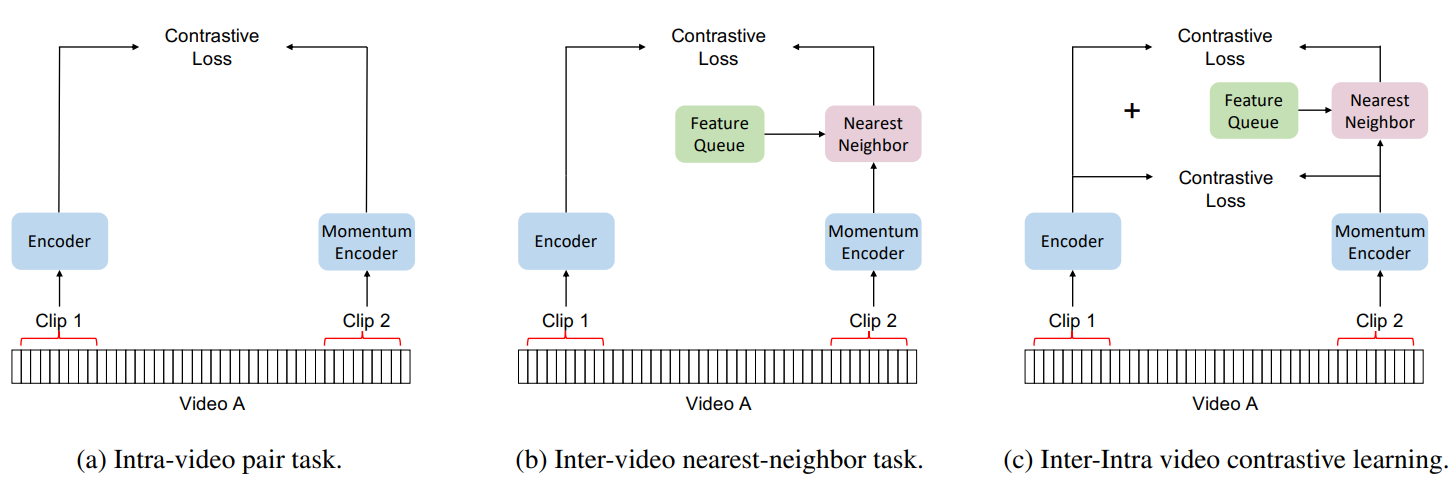

Paper review | VideoCLIP: Contrastive Pre-training for Zero-shot Video-Text Understanding - Datahunt

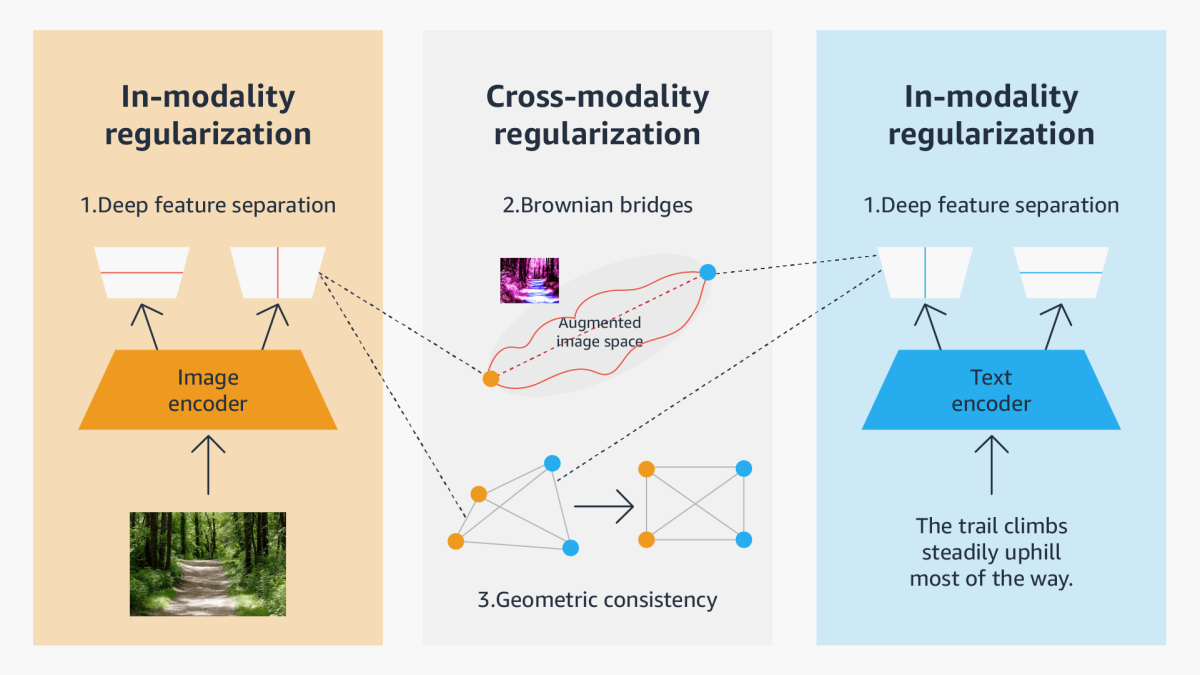

![[PDF] X-CLIP: End-to-End Multi-grained Contrastive Learning for Video-Text Retrieval | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/1ec886e2235763b08fa606a5d5ea3f4540f715ec/4-Figure2-1.png)

[PDF] X-CLIP: End-to-End Multi-grained Contrastive Learning for Video-Text Retrieval | Semantic Scholar

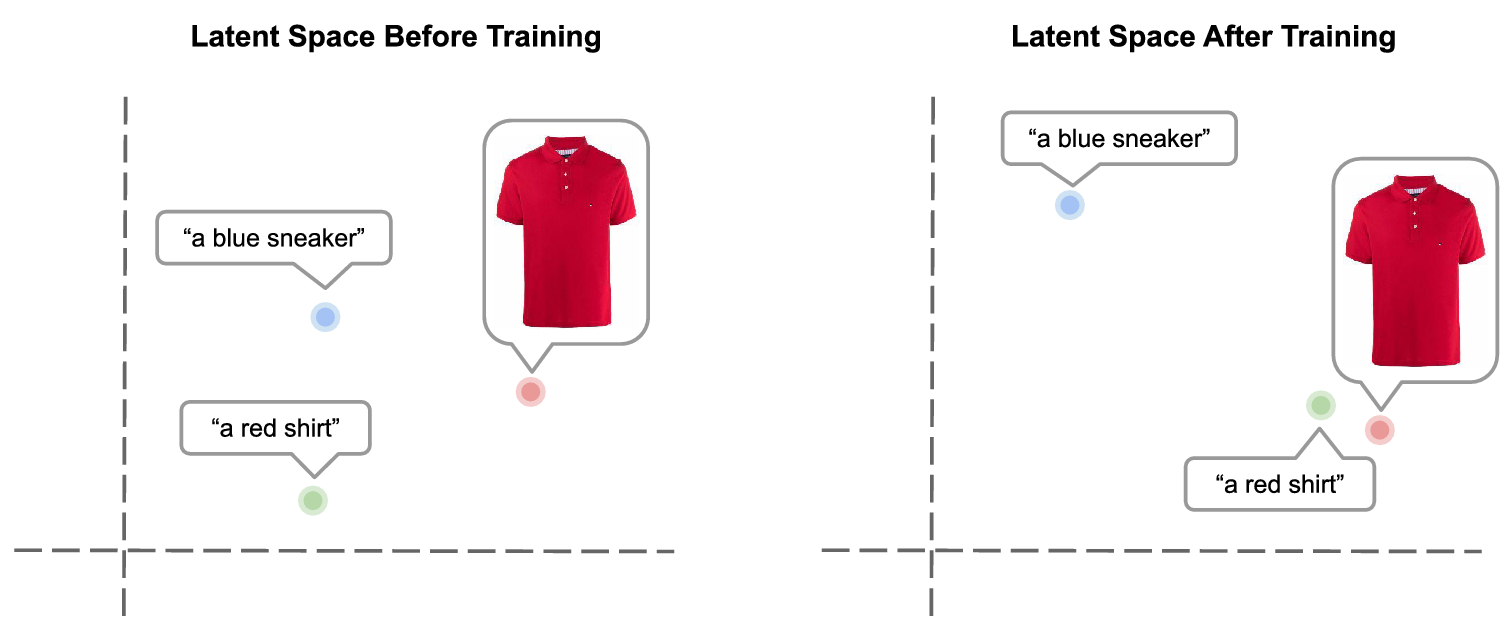

![[2203.02053] Mind the Gap: Understanding the Modality Gap in Multi-modal Contrastive Representation Learning](https://ar5iv.labs.arxiv.org/html/2203.02053/assets/x1.png)

[2203.02053] Mind the Gap: Understanding the Modality Gap in Multi-modal Contrastive Representation Learning

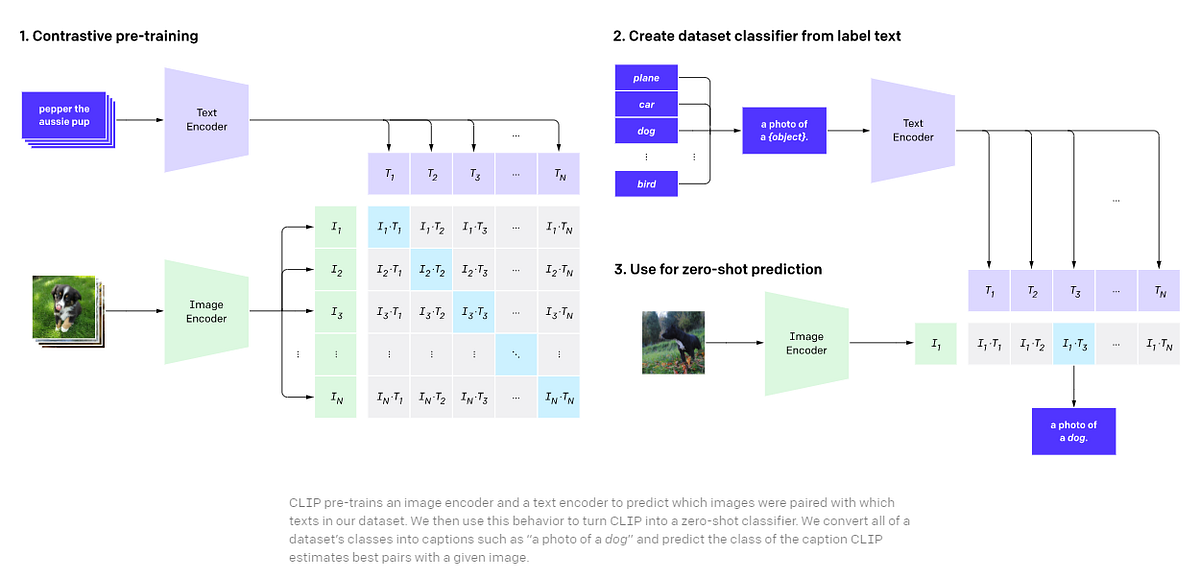

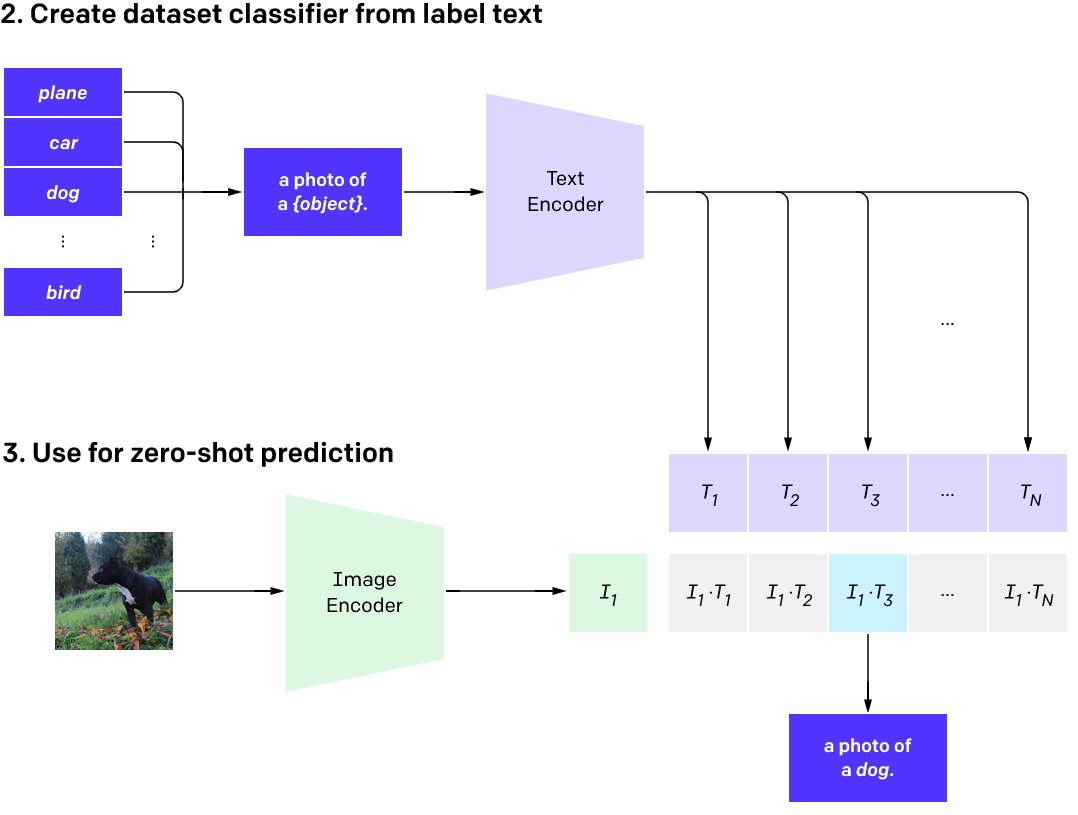

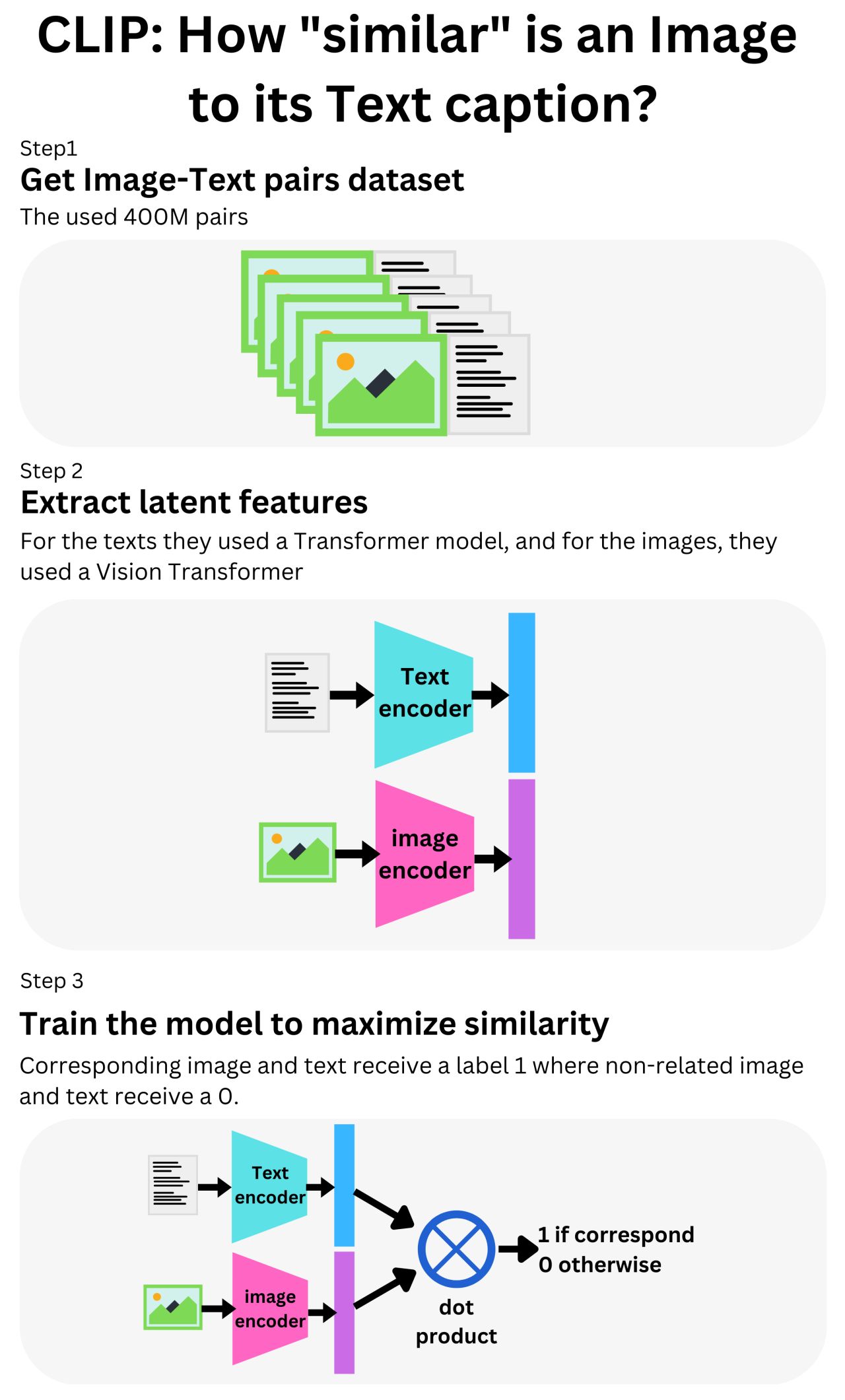

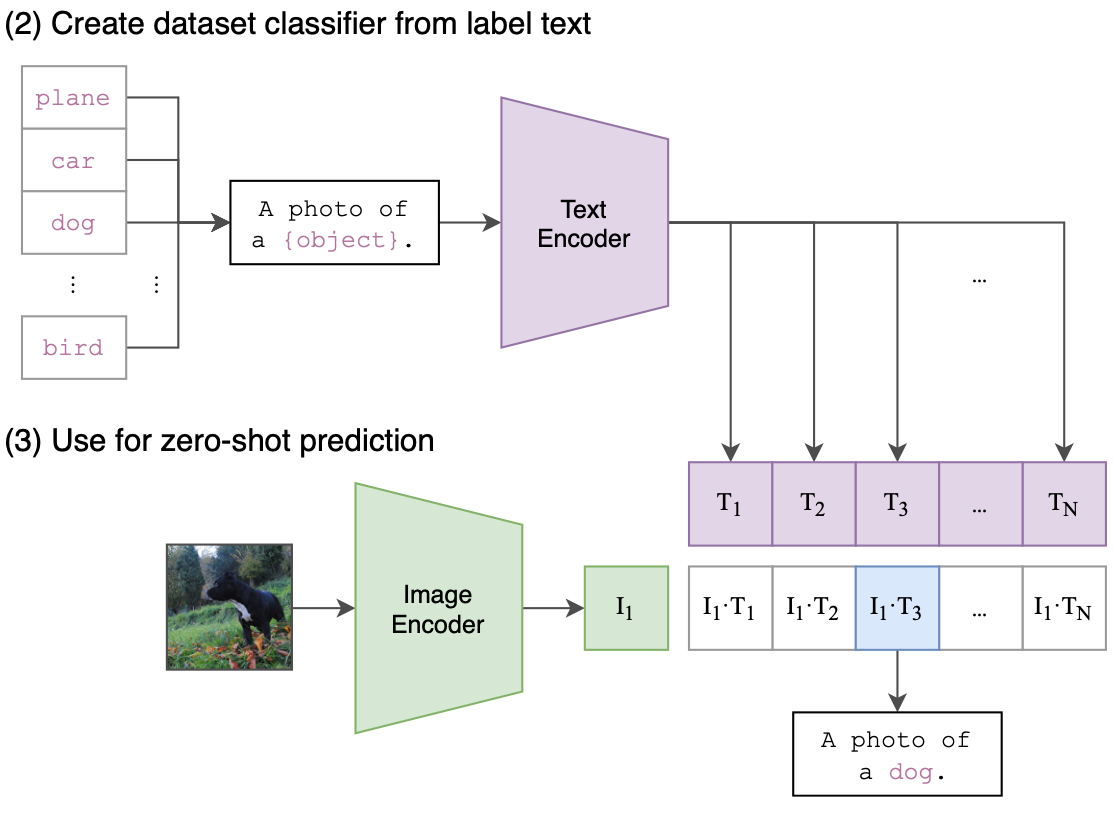

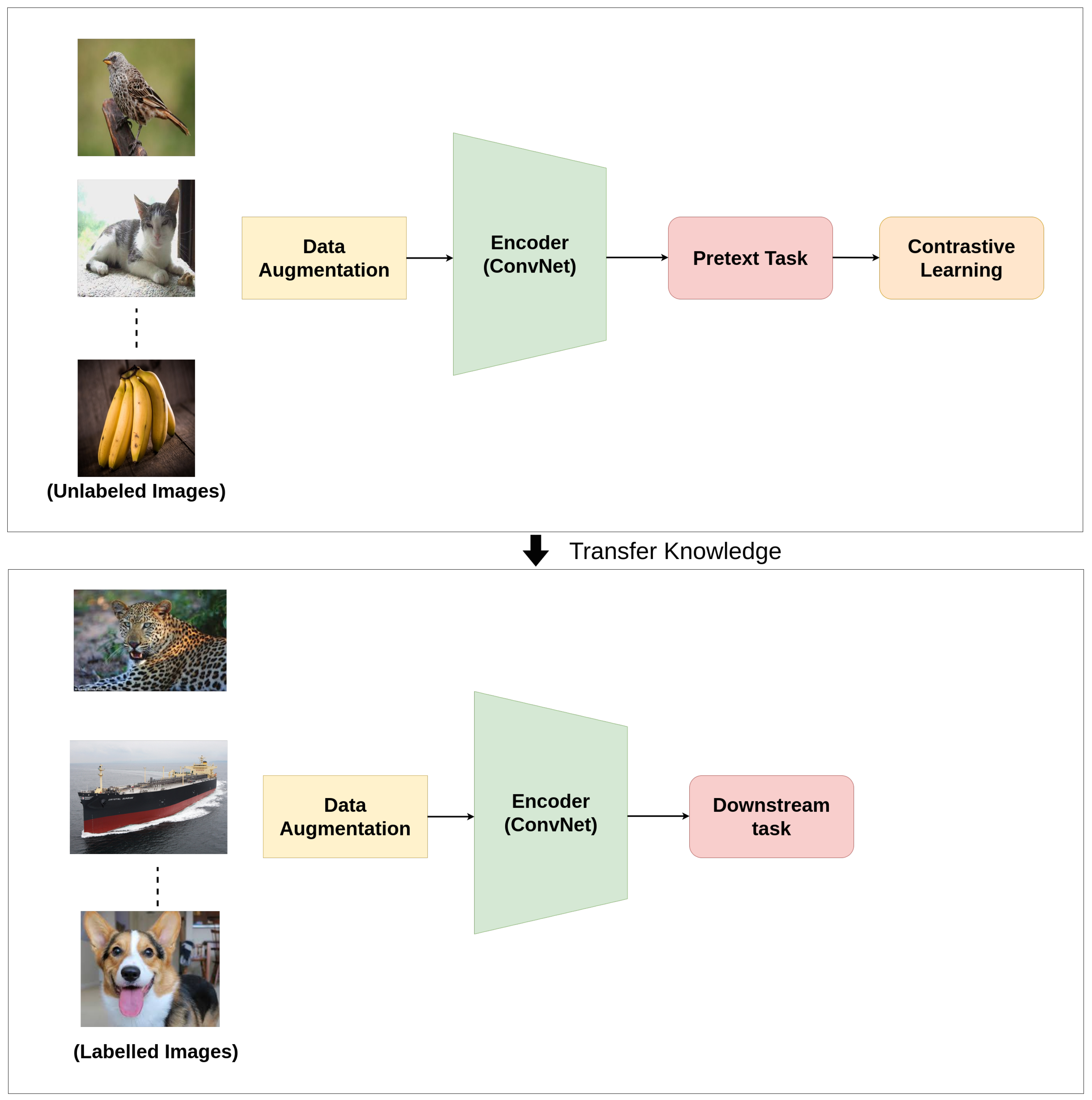

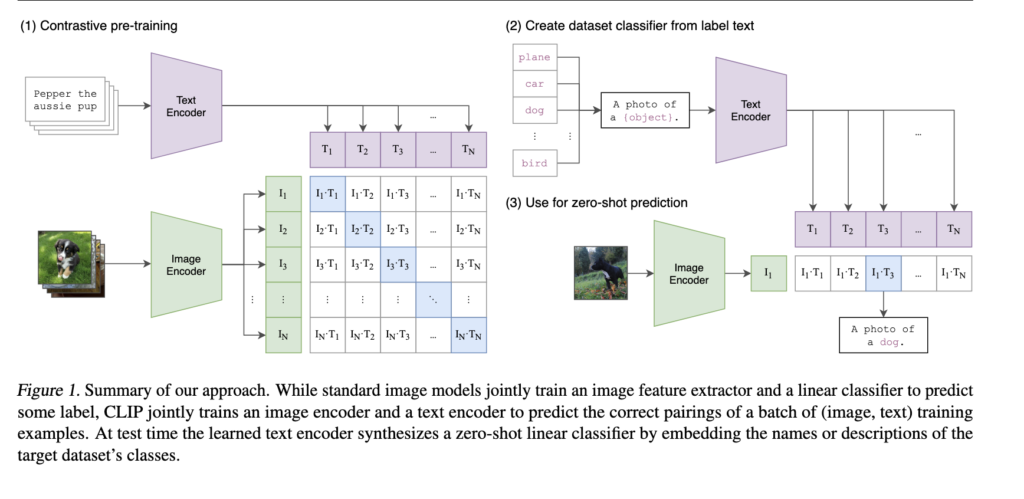

The network architecture of Contrastive Language-Image Pre-Training (CLIP). | Download Scientific Diagram

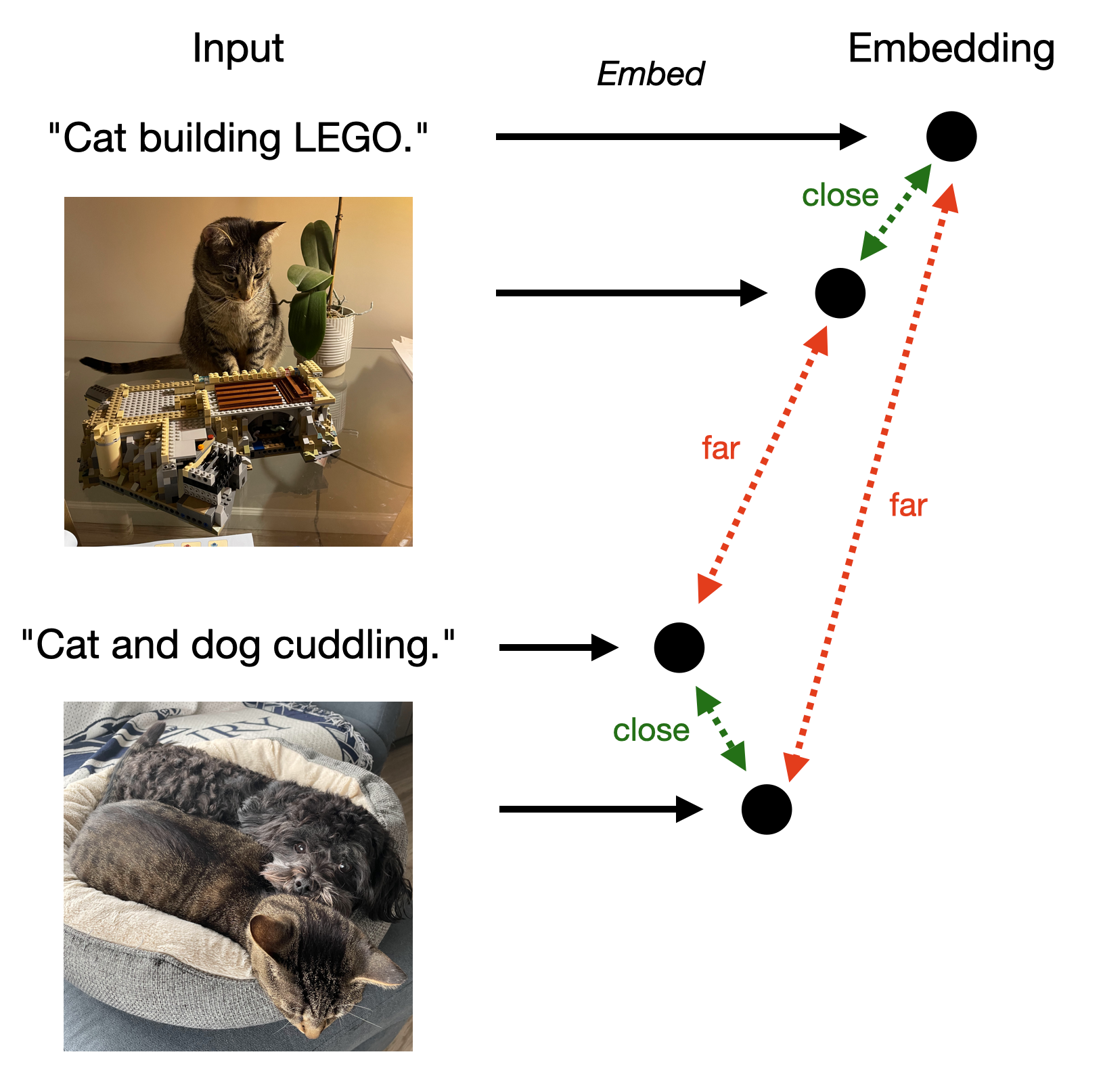

Understand CLIP (Contrastive Language-Image Pre-Training) — Visual Models from NLP | by mithil shah | Medium

GitHub - openai/CLIP: CLIP (Contrastive Language-Image Pretraining), Predict the most relevant text snippet given an image

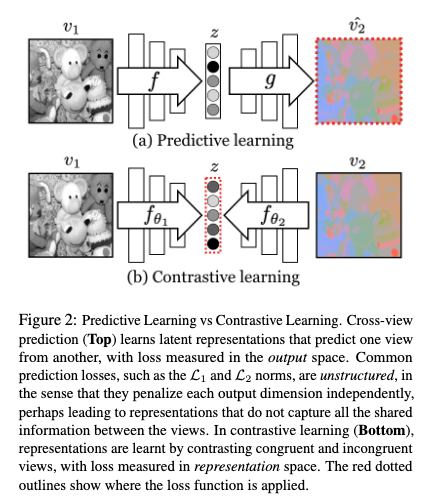

![[2204.14244] CLIP-Art: Contrastive Pre-training for Fine-Grained Art Classification](https://ar5iv.labs.arxiv.org/html/2204.14244/assets/x1.png)